Making an experimental checklist with AI

A balm for absent-minded experimentalists

(This should not have taken this long)

Whether innate, due to the distractions of our age, or simply age, I am increasingly forgetful. This forgetfulness sometimes spills over into research, particularly experimental work. There, researchers often work on (and must plan for) a long-winded series of steps to satisfy experimental validity and reviewer acidity. These steps are a hallmark of academic rigor and essential to scientific progress.

Which is why forgetting any of these is very annoying. Missing a manipulation check might require a weaker, post-hoc check that experienced reviewers will set their sights on like an old zebra straying from the pack; forgetting to clearly discuss experimental design or data exclusion details can easily confuse readers; failing to report reliability scores can cast doubt on the validity of a scale; and so forth.

Can we stop forgetting… with AI? Let’s try.

Project overview

Develop an experimental checklist with AI

🗒️ Topics: Research; Academia; Text GenAI

🔨 Tools: ChatGPT; Google Scholar.

💸 Cost: $0

⌛ Time to completion: No more than one hour; likely minutes.

Napkin planning

Let’s think before we tinker. Planning on a napkin, along with stating our goals, is always useful.

Goal: Develop a checklist so I don’t forget experimental steps.

Accuracy goal: High – must include a comprehensive, logical set of steps.

Creativity goal: Low (it’s just a list).

Admittedly, there was not too much to plan, so this was just an opportunity to have fun making this doodle.

Here, the napkin emphasizes information sources. The obvious source to start when developing an experimental checklist is a proxy for my memory: my previous work, which I should remember (right?). The second source is existing work: optional, “exemplar” research articles that might contain useful steps that my work might not have used. The third source is ChatGPT’s extensive knowledge of the academic review process and our ability to constrain it to adopt the persona of an academic reviewer. By combining these sources, I expect ChatGPT to provide a checklist that I might consult every time I plan to run an experiment.

Doing It With AI

Sources

We start by gathering the information sources. I selected four articles I published in the past that relied primarily on experiments: Grigsby et al. (2022); Hsieh et al. (2025); Meng et al. (2021); and Zamudio et al. (2025).

The second source is up to you – in this case, I provided a few articles I’m familiar with: Connors et al. (2021); Khan and Kalra (2021); Perez et al. (2022); and Sevilla and Kahn (2014).

The third source (ChatGPT) is prompted in the next step.

The prompt

I wrote the following prompt for ChatGPT. While my prompts are usually much more structured and detailed, this one worked just fine:

I want to build a checklist for myself about things I need to do while setting up experimental research. This is so I don’t forget any steps.

For example, in writing: state the research goal, state the experimental conditions, provide a discussion, etc. From the methodological side: include manipulation checks, discuss stimuli development, etc. From the reporting side: report effect sizes, report sample sizes, report sample breakdown by gender, etc.

If I provide you with (1) PDFs of previous articles I’ve published and (2) exemplar other articles both of which have experimental studies, could you provide me with such a checklist based on the articles as well as your own contribution, if you were to pretend you’re a Marketing scholar from a prestigious institution who routinely publishes in the Journal of Consumer Research and the Journal of Marketing Research?

Note the following important points:

The goal: Here, the checklist requirement is clearly stated at the beginning, with the additional remark about reducing forgetfulness.

The “sections”: To provide an a priori structure for the LLM, I provided a very brief set of examples in writing, methodological, and reporting sections, which mirror the usual flow of a research article.

Explicit information sources: The three sources of information are clearly specified.

Persona prompting: I explicitly requested the LLM to “pretend” to be a reviewer for target journals I’m interested in. While the empirical evidence in favor of persona prompting is mixed, I generally use it with reasonable success (of course, ideally, you might want to experiment by ablation, adding/removing a persona prompt, and evaluating your own resulting checklist).
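If you want to try the ablation mentioned in the last point, here is a minimal sketch of how the prompt variants could be assembled. It only builds the four prompt texts (persona on/off, exemplars on/off); it does not call any model. The names `build_prompt` and `ablation_grid` and the shortened prompt strings are my own illustrative assumptions, not the exact prompt used above.

```python
from itertools import product

# Shortened, illustrative versions of the prompt pieces (assumptions).
BASE_TASK = (
    "I want to build a checklist for myself about things I need to do "
    "while setting up experimental research."
)
EXEMPLARS = "Base the checklist on the attached exemplar articles as well."
PERSONA = (
    "Pretend you are a Marketing scholar who routinely publishes in the "
    "Journal of Consumer Research and the Journal of Marketing Research."
)


def build_prompt(use_persona: bool, use_exemplars: bool) -> str:
    """Assemble one prompt variant from the optional pieces."""
    parts = [BASE_TASK]
    if use_exemplars:
        parts.append(EXEMPLARS)
    if use_persona:
        parts.append(PERSONA)
    return "\n\n".join(parts)


def ablation_grid() -> dict:
    """Return all four variants, keyed by (use_persona, use_exemplars)."""
    return {
        (persona, exemplars): build_prompt(persona, exemplars)
        for persona, exemplars in product([True, False], repeat=2)
    }


if __name__ == "__main__":
    for key, prompt in ablation_grid().items():
        print(key)
        print(prompt)
        print("-" * 40)
```

Each variant can then be pasted into a fresh chat, and the resulting checklists compared side by side. Changing one piece at a time makes it easier to see which piece is responsible for a better or worse checklist.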

But it got weird, quick

You might imagine that this prompt, coupled with the papers supplied, would provide a pretty reasonable checklist for immediate use. Well, it did – sort of.

I first used this prompt with ChatGPT 5 back in October. At the time, I did not use any exemplar articles. ChatGPT produced a pretty good checklist and, after going back and forth a bit, I was able to coax it into creating a single-page checklist that I could then edit in Adobe Acrobat to allow it … well, to be checked. ChatGPT stated that it could create a short, compact version, and my response was:

Okay, let’s give it a shot. Figure out what format might work best for a single-page checklist. Perhaps two columns and landscape orientation?

The result was pretty good! (You can download the PDF below)

But then something strange happened. I came back in November to complete this post, and used ChatGPT 5.1 (which had now been released) along with the exemplar articles. The new checklist was… odd. ChatGPT first produced a very long checklist, which was both verbose and unspecific. I therefore asked:

Could you please produce a fillable PDF version? Let it be a short version to use before conducting an experimental study.

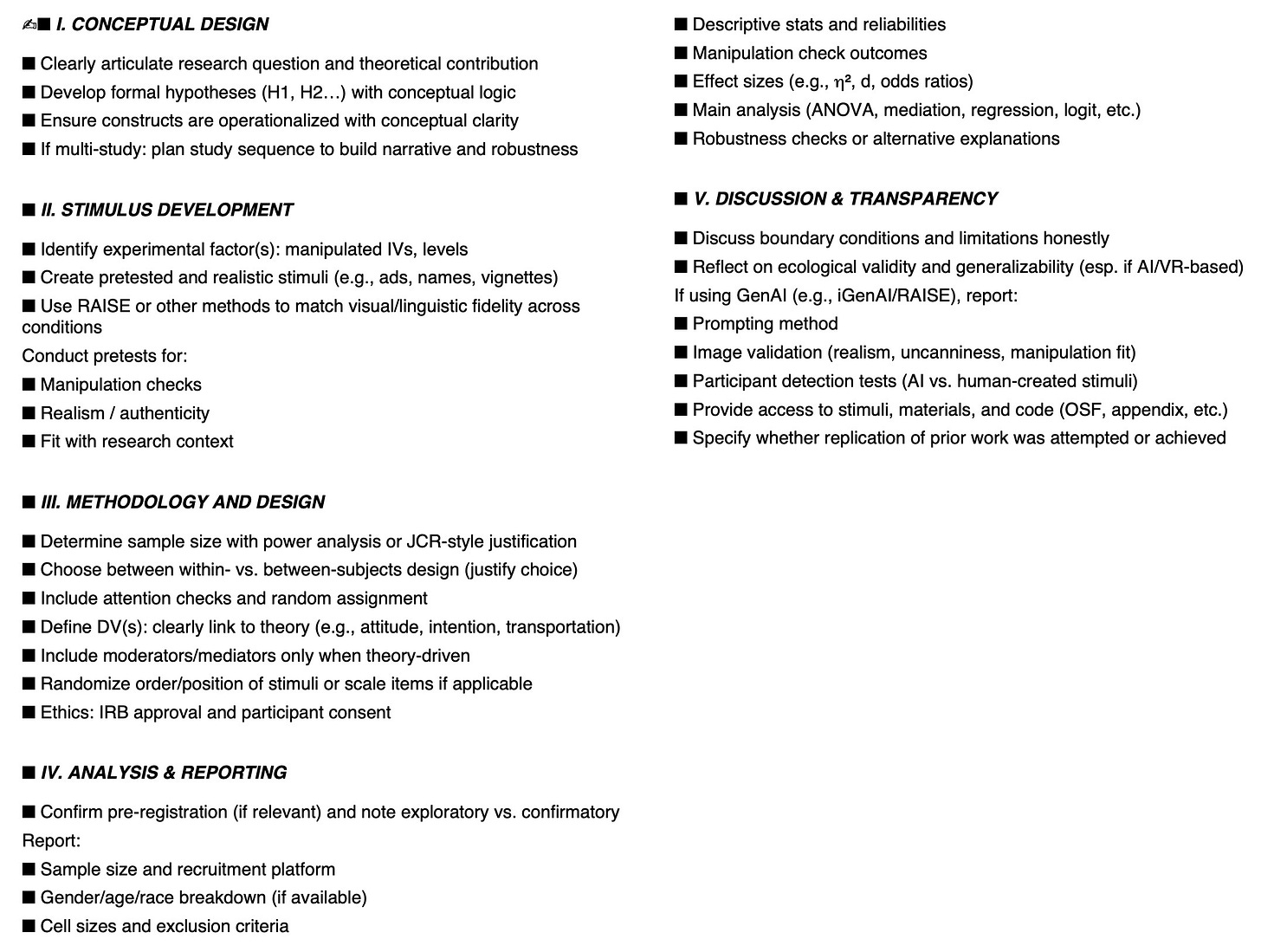

You can see the checklist and judge it for yourself:

I tried several times to improve on this substandard checklist, to no avail. The same approach on ChatGPT 5 Instant was a little better (but much longer, so a link is provided below).

I still preferred the original checklist. Going back to ChatGPT 5, even after removing the exemplar papers (which I suspected were a problem), the checklist was very wordy and had some tangential items:

The problem here is that, after a model update, the same prompt may no longer reproduce even a close version of the same work. If this is the case for a simple checklist, I shudder to think what the vagaries of an enterprise-level AI implementation might output. Want that document you need for work? Well, not today! Try rolling back to the previous version of our AI model!
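One modest way to keep an eye on this kind of drift is to save the text of a checklist you like and compare later outputs against it. The sketch below is only an illustration under stated assumptions: it expects each checklist as plain text with one item per line, and the function names `checklist_items` and `compare_checklists` are hypothetical. It uses Python's standard `difflib` module to produce a rough similarity score.

```python
import difflib
import re

# Matches an optional bullet ("-" or "*") and an optional "[ ]" / "[x]" box.
_PREFIX = re.compile(r"^\s*(?:[-*]\s*)?(?:\[[ xX]?\]\s*)?")


def checklist_items(text: str) -> list:
    """Split checklist text into normalized items, one per non-empty line."""
    items = []
    for line in text.splitlines():
        cleaned = _PREFIX.sub("", line).strip().lower()
        if cleaned:
            items.append(cleaned)
    return items


def compare_checklists(old_text: str, new_text: str) -> dict:
    """Report which items were kept, dropped, or added between two versions."""
    old_items = checklist_items(old_text)
    new_items = checklist_items(new_text)
    old_set, new_set = set(old_items), set(new_items)
    matcher = difflib.SequenceMatcher(None, old_items, new_items)
    return {
        "kept": sorted(old_set & new_set),
        "dropped": sorted(old_set - new_set),
        "added": sorted(new_set - old_set),
        # Rough 0-to-1 score; sensitive to item order and exact wording.
        "similarity": matcher.ratio(),
    }


if __name__ == "__main__":
    saved = "- [ ] Manipulation check\n- [ ] Report effect sizes"
    fresh = "- [ ] Report effect sizes\n- [ ] Pre-register hypotheses"
    print(compare_checklists(saved, fresh))
```

Exact string matching is crude: a reworded item counts as one dropped and one added. Still, it is enough to flag when a new model version has wandered far from a checklist you were happy with.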

ChatGPT 4o to the rescue

When in doubt, I always fall back on ChatGPT 4o. It’s fast, it’s good enough for simple analytical work, and very good for creative work. So, I tried the original prompt with no exemplars and… it worked surprisingly well!

It’s not too wordy, it’s one page, and it seems to list what I need to remember.

What about Gemini 3?

The web version of Gemini 3 generated a checklist. It looked about the same quality as my two preferred ones above… except Gemini cannot export to PDF! Instead, it provided abstruse Python code that could be used to construct the PDF, which is clearly not what the average user should be expected to do. So, I skipped it.

Did We Do It?

Today, we constructed a quick experimental checklist for academic research. We built it using ChatGPT, which is widely accessible, and using a few research articles (in this case, mine). The old ChatGPT 5 produced good results, which weren’t replicable (to the best of my ability with ChatGPT 5.1/5). Fortunately, ChatGPT 4o was able to generate a similar checklist. Now I won’t have any excuses if I forget any part of my experimental workflow!

Oh! And DIWA, my faithful robot assistant, reminded me to tell you this: “For those teaching experimental methods or mentoring student researchers, having a reusable checklist template like this can be sanity-saving. Even if it’s AI-generated — just make sure to double-check it!”

Should you do this with AI?

This is a reasonably straightforward application, so I would say yes. While this example highlights that the output of AI applications can vary wildly (and we’re at the mercy of whatever new update is pushed by a few companies), figuring out which ChatGPT version to use was reasonably quick. At the same time, this example highlights the general problem of replicating AI-generated output. Solutions include a mammoth-sized template list (the original article lists many woes with inconsistent AI output) and esoteric methods that are not easily applicable by everyday users.

Speaking about predictability in the context of management, Gimpl and Dakin (1984) compared business forecasting to casting bones – superstitious behavior to maintain an illusion of control, particularly for Western managers. But, surprisingly, magical thinking sometimes provided a benefit, such as for Labrador Indians – paraphrasing the paper, bone-casting yielded mostly random maps that helped hunters avoid over-hunting particular areas.

Randomness in the context of AI output doesn’t provide these surprising benefits, however, since the goal is to produce consistently usable and reproducible output (perhaps less for image generative AI). Fickle, unstable, inaccurate, and immeasurably energy-voracious, we nevertheless boil our seas in reverence of this new machine god, which fittingly reminded me of H.P. Lovecraft’s description of “the blind idiot god Azathoth, Lord of All Things, encircled by his flopping horde of mindless and amorphous dancers, and lulled by the thin monotonous piping of a daemoniac flute held in nameless paws.”

But hey! We made a checklist!